Can You Trust AI-Generated Content? Why AI Is Not Always Accurate

A Deep Dive Into How AI Learns From the Internet — And Why It Sometimes Gets Things Terribly Wrong

📖 Estimated Read Time: 12–14 Minutes

🗂️ Category: Artificial Intelligence | Technology

🔗 Sources: OpenAI, Mozilla Foundation, Common Crawl, MIT Sloan, GeeksforGeeks, AWS

Introduction: The Internet Inside a Machine

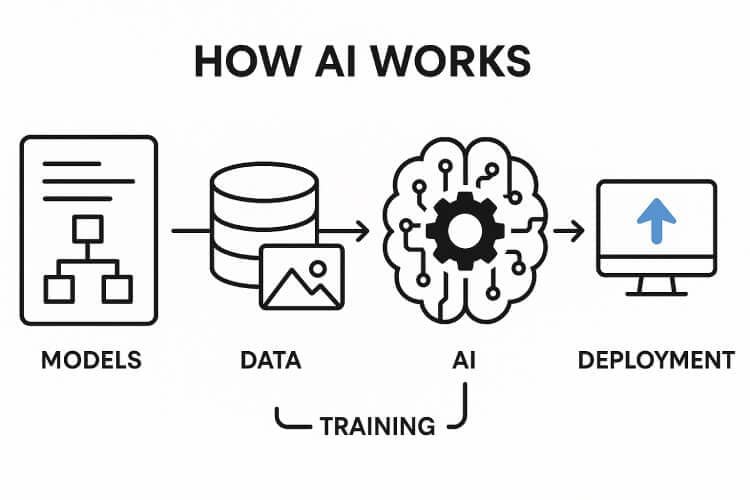

Imagine pouring the entire internet — every blog post, Wikipedia article, Reddit debate, news headline, academic paper, and social media comment ever written — into a single machine. Now imagine that machine tries to learn everything at once and later answers your questions based only on what it absorbed.

That, in simple terms, is how the language models behind ChatGPT, Gemini, Perplexity, and Claude are built.

But here is the uncomfortable truth most AI companies don't advertise loudly: a language model on its own does not browse the internet in real time. Unless a search or browsing tool is switched on, it doesn't "Google" your question when you ask it. Instead, it relies on a massive, pre-recorded snapshot of the web, collected months or even years before you typed your first prompt.

This fact sits at the root of many of the reasons AI-generated content is not always true, accurate, or reliable.

In this article, we'll walk through the complete 5-stage pipeline of how AI gathers data from the internet, what happens to that data, and why the entire process has critical flaws that directly impact the quality of answers you receive.

Stage 1 — Web Crawling: The Spider That Eats the Internet 🕷️

What is Web Crawling?

Before an AI model can learn anything, it needs raw material — and that raw material is text. The first step in building any large language model (LLM) is web crawling: an automated process where software "bots" systematically travel across the internet, jumping from one hyperlink to another, and downloading the content of billions of web pages.

Think of it like a spider building a web — except this spider is digital, moves at machine speed, and doesn't stop for sleep.

How Do Crawlers Work?

A web crawler starts with a seed list — a set of initial URLs. It visits those pages, reads the HTML content, finds all the links embedded in those pages, adds those new links to a queue, and then visits each of those pages too. This process repeats recursively, eventually covering a significant chunk of the entire public web.
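The seed-list-and-queue loop described above can be sketched in a few lines of Python. This is a minimal illustration, not how Common Crawl or any AI company actually crawls: the `TOY_WEB` dictionary is a hypothetical stand-in for real pages, the `crawl` function is a name invented for this example, and it works through the queue breadth-first in a loop (which covers the same pages as the recursive description) instead of fetching HTML over HTTP.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the links found on that page.
# A real crawler would download each page over HTTP and parse links from its HTML.
TOY_WEB = {
    "https://a.example/": ["https://b.example/", "https://c.example/"],
    "https://b.example/": ["https://a.example/", "https://d.example/"],
    "https://c.example/": ["https://d.example/"],
    "https://d.example/": [],
}


def crawl(seeds, get_links, max_pages=1000):
    """Breadth-first crawl: visit pages in queue order, queueing newly found links."""
    queue = deque(seeds)   # frontier: URLs waiting to be visited
    seen = set(seeds)      # every URL ever queued, so no page is queued twice
    visited = []           # URLs in the order they were visited
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        visited.append(url)
        for link in get_links(url):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


order = crawl(["https://a.example/"], lambda url: TOY_WEB.get(url, []))
print(order)
```

Swapping the lambda for a function that downloads a page and extracts its links would turn this into a real (if naive) crawler; production crawlers also add politeness delays, robots.txt checks, and URL tracking that scales to billions of pages.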

Major AI companies build their own crawlers, rely on pre-crawled datasets, or do both. The best-known pre-crawled source is Common Crawl, a non-profit organization founded in 2007 that has crawled and archived billions of web pages and makes the data freely available to researchers and AI companies.

The Scale is Almost Incomprehensible

The Common Crawl archive contains more than 9.5 petabytes of web data. To put that in perspective, one petabyte is one million gigabytes. The archive is so widely used that a 2024 Mozilla Foundation report found that at least 64% of the 47 large language models it analyzed (published between 2019 and October 2023) were trained on at least one filtered version of Common Crawl data.

OpenAI's GPT-3, the predecessor of the GPT-3.5 models behind the original ChatGPT, drew over 80% of its training tokens from Common Crawl data. According to Cloudflare, by mid-2025 training-related crawling had grown to account for nearly 80% of all AI bot activity on the web.

📊 Common Crawl Statistics:

| Metric | Value |
|---|---|
| Total archive size | 9.5+ petabytes |
| Web pages crawled | 250+ billion |
| Share of 47 major LLMs using it (Mozilla, 2024) | 64%+ |
| GPT-3 training tokens from Common Crawl | 80%+ |
| Training-related share of all AI bot activity (Cloudflare, mid-2025) | ~80% |

Table 1: Common Crawl's impact on AI training data landscape

🔗 Key Resources:

• Common Crawl Official Website: https://commoncrawl.org/

• Mozilla Foundation Report on AI Training Data: https://foundation.mozilla.org/en/blog/

• Cloudflare AI Bot Traffic Analysis: https://blog.cloudflare.com/crawlers-click-ai-bots-training/

Stage 2 — Web Scraping: Extracting the Gold From the Mine ⛏️

Crawling vs. Scraping — What's the Difference?

Many people confuse crawling and scraping, but they serve different functions in the pipeline:

• Crawling = Discovery. Finding which pages exist on the internet.

• Scraping = Extraction. Pulling the actual content out of those pages.

After a crawler maps the web, scrapers go in and extract the useful text — articles, forum posts, code snippets, book excerpts, product descriptions, news content — and store it in structured datasets.
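To make the extraction step concrete, here is a minimal Python sketch using only the standard library's `html.parser`. It illustrates the idea under simple assumptions and is not a production scraper: the `TextExtractor` class and `extract_text` helper are hypothetical names, and treating everything inside `<script>`, `<style>`, `<nav>`, and `<footer>` as non-content is only a rough rule of thumb.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text, skipping <script>, <style>, <nav> and <footer> blocks."""

    SKIP_TAGS = {"script", "style", "nav", "footer"}

    def __init__(self):
        super().__init__()
        self.skip_depth = 0   # > 0 while inside a tag we want to ignore
        self.chunks = []      # pieces of visible text, in document order

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP_TAGS:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP_TAGS and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self.skip_depth == 0:
            self.chunks.append(text)


def extract_text(html):
    """Return the visible text of an HTML page as one space-separated string."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


page = """<html><head><style>body {color: red}</style></head>
<body><nav>Home | About</nav>
<h1>AI and the Web</h1><p>Models learn from <b>scraped</b> text.</p>
<script>trackVisitor();</script><footer>Cookie banner</footer></body></html>"""
print(extract_text(page))
```

The navigation links, styling, tracking script, and cookie banner are dropped, leaving only the headline and paragraph text that would go into a dataset.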

What Sources Does AI Scrape?

The sources are astonishingly diverse. Most major LLMs are trained on a combination of:

• Public websites — news portals, technology blogs, educational sites

• Reddit — millions of forum threads and comment discussions

• Wikipedia, one of the most widely used single sources in AI training, covering millions of topics

• GitHub — code repositories in dozens of programming languages

• Books and academic journals — often scanned and digitized without explicit author permission

• Social media posts — public tweets, Facebook posts, LinkedIn articles

• YouTube subtitles — auto-generated captions from millions of videos

The Legal and Ethical Grey Zone

This is where things get controversial. Web scraping for AI training sits in a legally murky area. Many websites have Terms of Service that prohibit automated scraping, but AI companies often argue that training on publicly available data qualifies as fair use, a doctrine of US copyright law.

A 2023 Scientific American article reported that personal information found publicly on the web (your name, your business website, your social media profile) is very likely already part of AI training datasets. Publishers, authors, and artists have filed numerous lawsuits against AI companies over unauthorized use of their content.

The New York Times, for example, sued OpenAI and Microsoft in December 2023, arguing that millions of its articles were used to train AI models without a licensing agreement or compensation.

📌 Developer Perspective: If you're building platforms like Evanto or Banglar Katha, understanding scraping ethics is crucial. Always respect robots.txt files and implement clear content attribution systems in your digital marketplaces.
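Python's standard library ships a robots.txt parser, `urllib.robotparser`, so honoring those rules takes only a few lines. The sketch below parses a made-up robots.txt that blocks OpenAI's `GPTBot` crawler entirely and keeps every other bot out of `/private/`; the `site.example` URLs and the `MyResearchBot` user agent are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt. Against a live site you would instead call
# rp.set_url("https://site.example/robots.txt") followed by rp.read().
ROBOTS_TXT = """
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

rp = RobotFileParser()
rp.parse(ROBOTS_TXT.splitlines())

# Check each (user agent, URL) pair before fetching it.
for agent, url in [
    ("GPTBot", "https://site.example/blog/post-1"),
    ("MyResearchBot", "https://site.example/blog/post-1"),
    ("MyResearchBot", "https://site.example/private/orders"),
]:
    print(agent, url, "allowed" if rp.can_fetch(agent, url) else "blocked")
```

Keep in mind that robots.txt is a voluntary convention: it tells well-behaved crawlers what the site owner wants, but it does not technically stop anyone from fetching a page.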

🔗 Key Resources:

• Scientific American — Your Personal Data in AI Training: https://www.scientificamerican.com/article/your-personal-information-is-probably-being-used-to-train-generative-ai-models/

• Promptcloud — Web Scraping Challenges: https://www.promptcloud.com/blog/web-scraping-for-ai-challenges/

• Scrapfly — AI Training Web Scraping Methods: https://scrapfly.io/use-case/ai-training-web-scraping

Stage 3 — Data Cleaning and Filtering: The Messy Reality of the Internet 🧹

Why Raw Web Data is Unusable

If you've ever built a database-driven website, for example with PHP and MySQL, you already know that raw data is always messy. Now multiply that problem by billions of web pages.

The raw internet is full of:

• Duplicate content — the same news article republished across 500 websites

• Spam and clickbait — low-quality text designed to manipulate search rankings

• Broken HTML — malformed tags, garbled characters, corrupted encoding

• Outdated information — articles from 2005 that are factually wrong today

• Biased and toxic content — hate speech, misinformation, extremist rhetoric

• Advertisements and boilerplate — headers, footers, cookie banners, navigation menus

None of this is useful training material. In fact, it is actively harmful. If an AI model trains on toxic or incorrect text, it learns to reproduce that toxicity and inaccuracy.

The Cleaning Pipeline

Data scientists run the raw scraped content through a multi-step filtering pipeline:

1. Deduplication — Remove near-identical copies of the same text. A viral article copied across 300 news aggregators should count once, not 300 times.

2. Language Detection — Identify and sort content by language, so models can be trained appropriately on each language.

3. Quality Scoring — Rank content based on writing quality, coherence, and information density. Wikipedia scores high; spam blogs score low.

4. Toxicity Filtering — Remove hate speech, explicit content, and dangerous instructions.

5. PII Removal — Strip or anonymize personally identifiable information like phone numbers, email addresses, and social security numbers.

6. Boilerplate Removal — Eliminate cookie banners, navigation menus, repeated site headers, and advertisement text.

7. Semantic Filtering — Remove pages where the actual content is too short, too repetitive, or semantically meaningless.
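A few of the steps above (step 1's exact deduplication, a minimum-length filter standing in for step 7, and step 5's PII removal) can be sketched in plain Python. This is a toy version built on stated assumptions: the `clean` function, the `min_words` threshold, and both regular expressions are hypothetical, language detection and toxicity filtering are skipped, and real pipelines typically rely on near-duplicate detection such as MinHash, trained quality classifiers, and far more robust PII detection.

```python
import hashlib
import re

# Illustrative patterns only: real PII detection needs much broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")  # e.g. 555-123-4567


def normalize(text):
    """Lowercase and collapse whitespace so trivially different copies hash the same."""
    return " ".join(text.lower().split())


def scrub_pii(text):
    """Replace email addresses and phone numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def clean(docs, min_words=5):
    seen_hashes = set()
    kept = []
    for doc in docs:
        key = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if key in seen_hashes:              # step 1: drop exact duplicates
            continue
        seen_hashes.add(key)
        if len(doc.split()) < min_words:    # step 7: drop pages that are too short
            continue
        kept.append(scrub_pii(doc))         # step 5: strip PII
    return kept


raw = [
    "Breaking: new model released today with better accuracy.",
    "BREAKING:  new model released   today with better accuracy.",
    "Click here!",
    "Contact jane.doe@example.com or call 555-123-4567 for details.",
]
for doc in clean(raw):
    print(doc)
```

The re-formatted copy of the headline is caught as a duplicate, "Click here!" is too short to keep, and the contact details in the last document are replaced with placeholders.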

Why Cleaning is Imperfect — And Why It Matters for You

Here is the critical problem: no filtering system is perfect. Some misinformation always slips through. Some biased content survives. Some outdated facts get included. When an AI model trains on this imperfect data, it absorbs those imperfections as if they were truth.

This is one of the technical reasons AI hallucinates: it learned from flawed source material, with no built-in way to tell the accurate from the inaccurate.

🔗 Key Resources:

• Promptcloud — Data Quality Challenges: https://www.promptcloud.com/blog/web-scraping-for-ai-challenges/

• ScrapingAnt — LLM Data Cleaning: https://scrapingant.com/blog/llm-powered-data-normalization-cleaning-scraped-data

• ByteByteGo — How LLMs Learn from the Internet: https://blog.bytebytego.com/p/how-llms-learn-from-the-internet

Stage 4 — Model Training: Teaching the Machine to Think 🧠

What Happens During Training?

Once the cleaned dataset is ready (typically many terabytes of text, or trillions of tokens for recent models), the actual training begins. This is the most computationally expensive part of the pipeline, requiring thousands of GPUs or other specialized AI chips running continuously for weeks or months.

But what exactly is the model doing during training?

The Next-Token Prediction Game

At its core, LLM training is based on an elegantly simple concept: predict the next word (token).

The model is shown a sequence of text, say a paragraph from a Wikipedia article, and at each position it has to predict the next token from the ones before it. It makes a guess, compares the guess to the real token, and measures the error. Backpropagation then works out how much each of its billions of internal parameters contributed to that error, and an optimizer adjusts the parameters to reduce it.

This entire loop — read text → predict → measure error → adjust parameters → repeat — happens billions of times across the training dataset.
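The predict, measure, adjust loop can be shown with a tiny next-token model in plain Python. This is a deliberately simplified sketch, not how any production LLM is built: the model is just a table of scores for "which word follows which" (a bigram model), trained by gradient descent on cross-entropy loss over a made-up nine-word corpus. Because it has a single layer, the gradient is written out by hand; a real transformer obtains the equivalent gradient through backpropagation across many layers. As in real training, every position in the text is a prediction target, not only the last word.

```python
import math

# Made-up nine-word corpus; real models see trillions of tokens.
corpus = "the cat sat on the mat the cat ate".split()
vocab = sorted(set(corpus))
idx = {word: i for i, word in enumerate(vocab)}
V = len(vocab)

# Parameters: one score (logit) for every (current word, next word) pair.
logits = [[0.0] * V for _ in range(V)]
# Training examples: every position in the text predicts the word after it.
pairs = [(idx[a], idx[b]) for a, b in zip(corpus, corpus[1:])]


def softmax(row):
    """Turn a row of scores into probabilities that sum to 1."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def train_step(lr=0.5):
    """One pass over the data: predict, measure error, adjust parameters."""
    loss = 0.0
    grads = [[0.0] * V for _ in range(V)]
    for cur, nxt in pairs:
        probs = softmax(logits[cur])
        loss -= math.log(probs[nxt])       # cross-entropy: how surprised the model was
        for j in range(V):                  # gradient of that loss w.r.t. each logit
            grads[cur][j] += probs[j] - (1.0 if j == nxt else 0.0)
    for i in range(V):                      # gradient descent: step against the gradient
        for j in range(V):
            logits[i][j] -= lr * grads[i][j] / len(pairs)
    return loss / len(pairs)


losses = [train_step() for _ in range(200)]
print(f"average loss: {losses[0]:.3f} -> {losses[-1]:.3f}")

probs = softmax(logits[idx["the"]])
print("most likely word after 'the':", max(vocab, key=lambda w: probs[idx[w]]))
```

The average loss starts at ln 6 (about 1.79, a uniform guess over the six-word vocabulary) and falls as training proceeds, and the model ends up predicting "cat" after "the" purely because that pair appears most often in its data.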

What the Model Actually Learns

Here is something crucial to understand: the model is not primarily memorizing facts, although it can reproduce some text it saw many times. It is learning statistical patterns in language: which words tend to follow other words, which concepts are related, what a valid sentence looks like, and how different topics connect to each other.

It's not building a fact database. It's building a language intuition engine.

This is exactly why AI can sound completely confident while being completely wrong. It's not checking a fact database when it answers you — it's generating the most statistically likely response based on the patterns it learned.

Training Data Scale: Key Numbers

| Model | Training Tokens | Data Sources / Common Crawl Share |
|---|---|---|
| GPT-3 (OpenAI) | 300 billion | ~80% from Common Crawl |
| GPT-4 (OpenAI) | Undisclosed | Publicly available and licensed data; mix not itemized |
| LLaMA 2 (Meta) | 2 trillion | "Publicly available sources"; mix not itemized |
| Gemini (Google) | Undisclosed | Multimodal, multi-source |

Table 2: Major LLM training data statistics

🔗 Key Resources:

• OpenAI — How ChatGPT and Foundation Models Are Developed: https://help.openai.com/en/articles/7842364-how-chatgpt-and-our-language-models-are-developed

• NN/g — How AI Models Are Trained: https://www.nngroup.com/articles/ai-model-training/

• Mozilla Foundation — Common Crawl's Impact: https://foundation.mozilla.org/en/blog/

• ByteByteGo — How LLMs Learn: https://blog.bytebytego.com/p/how-llms-learn-from-the-internet

Stage 5 — Fine-Tuning and RLHF: Making AI Human-Friendly 🎯

Why Base Training Isn't Enough

A model trained purely on raw internet data in Stage 4 is impressive but dangerous. Without further guidance, it might:

• Reproduce offensive or toxic language it learned from harmful websites

• Generate confident misinformation it absorbed from conspiracy blogs

• Continue your prompt as if it were a web page instead of answering it, or reply in a cold, robotic, or incoherent style

• Provide harmful instructions for dangerous activities

This is why fine-tuning exists — a second, more targeted training phase that shapes the model's behavior.

What is Fine-Tuning?

Fine-tuning takes the base pre-trained model and trains it further on a much smaller, carefully curated dataset focused on specific tasks or behaviors. For example:

• OpenAI fine-tuned GPT on examples of helpful Q&A conversations

• Meta fine-tuned LLaMA on instruction-following datasets

• Medical AI companies fine-tune models on verified clinical literature

Think of pre-training as giving someone a broad general education, and fine-tuning as sending them to a specialized professional school.

The RLHF Revolution: Learning From Human Judgment

One of the most influential techniques for making AI models safer and more helpful is Reinforcement Learning from Human Feedback (RLHF), which has been used in training ChatGPT, Claude, and Gemini.

As popularized by OpenAI's InstructGPT work, RLHF is usually described in three steps:

Step 1 — Supervised Fine-Tuning (SFT)

Human trainers write example conversations showing ideal AI behavior. The model learns from these human-written examples to produce high-quality responses.

Step 2 — Reward Model Training

The AI generates multiple responses to the same prompt. Human raters rank these responses from best to worst. A separate "reward model" is trained to predict which responses humans prefer, essentially learning what a good answer looks like.
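A common way to train the reward model, described in OpenAI's InstructGPT paper, turns each human ranking into pairs and minimizes -log(sigmoid(r_better - r_worse)), which pushes the score of the preferred response above the other one. The Python sketch below shows only that loss; the function names and scores are hypothetical, and in a real system the scores would come from a neural network that reads the prompt and the response.

```python
import math
from itertools import combinations


def pairwise_loss(r_better, r_worse):
    """-log(sigmoid(r_better - r_worse)): near 0 when the preferred response scores higher."""
    return math.log1p(math.exp(-(r_better - r_worse)))


def ranking_loss(scores_best_to_worst):
    """Average pairwise loss over every (better, worse) pair implied by one human ranking."""
    pairs = list(combinations(scores_best_to_worst, 2))  # keeps ranking order in each pair
    return sum(pairwise_loss(better, worse) for better, worse in pairs) / len(pairs)


# Hypothetical reward-model scores for three responses, listed in the order
# human raters ranked them (best first).
agrees_with_raters = [2.0, 0.5, -1.0]
disagrees_with_raters = [-1.0, 0.5, 2.0]

print(round(ranking_loss(agrees_with_raters), 3))
print(round(ranking_loss(disagrees_with_raters), 3))
```

The loss is small when the reward model's scores agree with the human ranking and large when they contradict it; training adjusts the reward model to shrink it.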

Step 3 — Policy Optimization (PPO)

The main AI model is then trained, typically with the Proximal Policy Optimization (PPO) algorithm, to maximize the score given by the reward model. It learns to generate responses that the reward model predicts humans will prefer, which ideally means responses that are helpful, accurate, safe, and well-aligned with human values.
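Two ingredients often used in this step can be sketched in Python. The first, from OpenAI's InstructGPT recipe, subtracts a penalty (scaled by `beta`) when the model's log-probability for a response drifts away from the Step 1 reference model, which discourages it from gaming the reward model with odd outputs. The second is PPO's clipped objective, which caps how much a single update can change the probability of a response. The function names, `beta=0.1`, and `epsilon=0.2` are illustrative assumptions, and real implementations typically apply these per token, with advantages estimated by a separate value model.

```python
def shaped_reward(rm_score, logprob_policy, logprob_reference, beta=0.1):
    """Reward-model score minus a penalty for drifting from the reference (SFT) model.

    logprob_policy and logprob_reference are the log-probabilities that the model
    being trained and the frozen Step 1 model assign to the same response.
    """
    return rm_score - beta * (logprob_policy - logprob_reference)


def clipped_objective(ratio, advantage, epsilon=0.2):
    """PPO's clipped surrogate for one sample (to be maximized).

    ratio = pi_new(response) / pi_old(response); advantage says how much better
    than expected the response turned out. Clipping the ratio to
    [1 - epsilon, 1 + epsilon] limits how far one update can move the policy.
    """
    clipped_ratio = min(max(ratio, 1.0 - epsilon), 1.0 + epsilon)
    return min(ratio * advantage, clipped_ratio * advantage)


# Hypothetical numbers for illustration only.
print(shaped_reward(rm_score=1.0, logprob_policy=-2.0, logprob_reference=-3.0))
print(clipped_objective(ratio=1.5, advantage=2.0))
```

In the second call, the new policy made a good response 1.5 times more likely, but the objective only credits the change up to the clipped ratio of 1.2, so one lucky batch cannot push the model too far at once.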

Why RLHF Still Has Limitations

RLHF is powerful, but it introduces new problems:

• It's expensive — hiring human raters for millions of response comparisons costs enormous amounts of money

• Human raters have biases — their preferences may reflect cultural, political, or personal biases

• It can cause over-caution — models sometimes become too reluctant to answer legitimate questions after RLHF

• It doesn't eliminate hallucinations — RLHF improves tone and safety, but does not give the model a fact-checking mechanism

The model still fundamentally cannot verify whether what it's saying is true. It can only generate what sounds most appropriate based on everything it has learned.

🔗 Key Resources:

• GeeksforGeeks — Reinforcement Learning from Human Feedback: https://www.geeksforgeeks.org/machine-learning/reinforcement-learning-from-human-feedback/

• AWS — What is RLHF?: https://aws.amazon.com/what-is/reinforcement-learning-from-human-feedback/

• Coursera — RLHF Understanding Guide: https://www.coursera.org/articles/rlhf

• Alation — What is RLHF: https://www.alation.com/blog/what-is-rlhf-reinforcement-learning-human-feedback/

The Full Pipeline: A Visual Summary

Complete AI Training Data Pipeline:

1. INTERNET (Billions of Web Pages)

2. STAGE 1: CRAWLING

• Bots discover pages via links (Common Crawl)

• 9.5+ Petabytes of raw web data

3. STAGE 2: SCRAPING

• Extract text from HTML pages

• Wikipedia, Reddit, GitHub, Books, Code, News, Forums

4. STAGE 3: CLEANING

• Remove spam, duplicates, toxic content, broken data, PII

• Deduplication + Filtering + Quality Scoring

5. STAGE 4: TRAINING

• Next-token prediction on GPU clusters

• Billions of parameters adjusted

6. STAGE 5: RLHF

• Human raters rank responses

• Reward model → PPO optimization

7. DEPLOYED AI MODEL

• ChatGPT, Gemini, Claude, Perplexity, etc.

Why This Entire Process Creates the "Not Always True" Problem

Now that you understand the full pipeline, the reasons AI fails become crystal clear:

1. Knowledge Cutoff Problem

Every AI model has a training cutoff date, and its built-in knowledge of the world stops there. If you ask a model without web access about an event that happened after its cutoff, it will either say it doesn't know or, worse, fabricate a plausible-sounding answer. This is why AI content about current events, recent statistics, or new technologies is particularly unreliable.

2. The Garbage-In Problem

If the training data contained misinformation — and the internet has plenty — the model absorbed it. The cleaning filters are not perfect. Some conspiracy theories, outdated medical advice, and factually wrong articles survived the cleaning process and shaped the model's "knowledge."

3. Statistical Plausibility vs. Factual Truth

The model always generates the most statistically likely continuation of text — not the most factually accurate. These two things often overlap, but sometimes they diverge dramatically. When they diverge, you get a hallucination that sounds completely convincing.
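The gap between "most likely" and "true" can be illustrated with a toy counter in Python. It is only an analogy: a real LLM learns far richer patterns than raw counts of whole sentences, and the four training sentences below are invented for the example, with a false claim deliberately repeated more often than the true one (the capital of Australia is Canberra).

```python
from collections import Counter

# Invented training snippets: the false claim appears three times,
# the true one only once.
training_text = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]

prompt = "the capital of australia is"

# Count which word follows the prompt in the training text.
continuations = Counter()
for sentence in training_text:
    if sentence.startswith(prompt + " "):
        next_word = sentence[len(prompt) + 1:].split()[0]
        continuations[next_word] += 1

total = sum(continuations.values())
for word, count in continuations.most_common():
    print(f"{word}: {count / total:.0%}")
```

The statistically "most likely" continuation here is the wrong one, and a system that simply picks the likeliest continuation would state it with full confidence.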

4. No Real-Time Verification

Unlike a search engine, which looks up indexed pages at the moment you search, a standard LLM has no built-in mechanism to verify claims against a live source. It produces answers from what it learned in training, and like human memory, that memory can be confidently wrong.

Practical Advice: How to Use AI Content Responsibly

Given everything above, here is how you should treat AI-generated content:

✅ Best Practices:

• Use AI as a first draft — great for structure and style, not final facts

• Always verify statistics and dates against original sources

• Cross-check citations — AI often invents fake academic references

• Use AI for ideas, not authoritative claims — especially in tech, law, or medicine

• For your blog and digital platforms — add a human editorial review layer before publishing AI-generated content

❌ What to Avoid:

• Never copy-paste AI output blindly into product listings, legal documents, or health advice pages

• Never trust AI with recent news unless it has live web-browsing capability explicitly enabled

• Don't use AI-generated citations without verifying they exist

• Don't assume AI knows your business-specific data or recent platform changes
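For the citation checks above, one quick safeguard is to test whether a DOI at least has a valid shape and, if so, whether it is actually registered. The Python sketch below is a hedged starting point, not a complete verifier: the regular expression follows the pattern Crossref has suggested for modern DOIs, `doi_is_registered` assumes the doi.org handle API answers with JSON containing `"responseCode": 1` for registered DOIs and HTTP 404 for unknown ones (check the current DOI documentation), and both function names are hypothetical.

```python
import json
import re
import urllib.error
import urllib.parse
import urllib.request

# Pattern Crossref has suggested for matching modern DOIs (case-insensitive).
DOI_RE = re.compile(r"^10\.\d{4,9}/[-._;()/:A-Z0-9]+$", re.IGNORECASE)


def looks_like_doi(text):
    """Offline check: does the string at least have the shape of a DOI?"""
    return DOI_RE.match(text.strip()) is not None


def doi_is_registered(doi, timeout=10):
    """Online check (needs network access).

    Assumes the doi.org handle API returns JSON with "responseCode": 1 for a
    registered DOI and HTTP 404 for an unknown one.
    """
    url = "https://doi.org/api/handles/" + urllib.parse.quote(doi)
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return json.load(resp).get("responseCode") == 1
    except urllib.error.HTTPError as err:
        if err.code == 404:   # unknown DOI
            return False
        raise


# The candidate strings are made up; only the offline check runs here.
for candidate in ["10.1234/abcd.5678", "fake-ref-2023"]:
    print(candidate, looks_like_doi(candidate))
```

An invented reference can still pass the shape test, so the online lookup (or simply opening `https://doi.org/` followed by the DOI in a browser) is the step that actually catches fabricated citations. References without a DOI still need to be checked by hand.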

Conclusion: Powerful Tool, Imperfect Oracle

The five-stage pipeline — crawling → scraping → cleaning → training → RLHF — is a remarkable engineering achievement. It has produced AI systems capable of writing code, explaining complex topics, translating languages, and assisting millions of people every day.

But understanding how the sausage is made should permanently change how you interact with AI outputs. The system was built from an imperfect, biased, incomplete snapshot of the internet, processed through imperfect filters, trained on statistical patterns rather than facts, and refined by human raters with their own limitations.

AI is a powerful thinking partner — not an infallible source of truth. Treat it accordingly, and it will serve you well. Trust it blindly, and it will eventually embarrass you.

Further Reading and External Sources

Complete Reference List

Common Crawl and Web Crawling:

• Common Crawl Official Website: https://commoncrawl.org/

• Common Crawl — Research References 2025: https://commoncrawl.org/blog/a-sampling-of-2025-research-referencing-common-crawl

• Common Crawl — SEO Web Graph Data: https://commoncrawl.org/blog/how-seos-are-using-common-crawls-web-graph-data-for-ai-ranking-signals

• Cloudflare — AI Bot Traffic Analysis: https://blog.cloudflare.com/crawlers-click-ai-bots-training/

Web Scraping and Data Collection:

• Scrapfly — Web Scraping for AI Training: https://scrapfly.io/use-case/ai-training-web-scraping

• Promptcloud — Web Scraping Challenges: https://www.promptcloud.com/blog/web-scraping-for-ai-challenges/

• Scientific American — Personal Data in AI Training: https://www.scientificamerican.com/article/your-personal-information-is-probably-being-used-to-train-generative-ai-models/

AI Model Training:

• OpenAI — How ChatGPT is Developed: https://help.openai.com/en/articles/7842364-how-chatgpt-and-our-language-models-are-developed

• NN/g — How AI Models Are Trained: https://www.nngroup.com/articles/ai-model-training/

• ByteByteGo — How LLMs Learn from the Internet: https://blog.bytebytego.com/p/how-llms-learn-from-the-internet

Data Cleaning and Quality:

• ScrapingAnt — Data Cleaning for LLMs: https://scrapingant.com/blog/llm-powered-data-normalization-cleaning-scraped-data

RLHF and Fine-Tuning:

• GeeksforGeeks — RLHF Explained: https://www.geeksforgeeks.org/machine-learning/reinforcement-learning-from-human-feedback/

• AWS — What is RLHF?: https://aws.amazon.com/what-is/reinforcement-learning-from-human-feedback/

• Coursera — RLHF Understanding: https://www.coursera.org/articles/rlhf

• Alation — What is RLHF: https://www.alation.com/blog/what-is-rlhf-reinforcement-learning-human-feedback/

AI Limitations and Analysis:

• Mozilla Foundation — Common Crawl Battle Royale Report: https://foundation.mozilla.org/en/blog/

• The Atlantic — Common Crawl Nonprofit Feeding AI: https://www.theatlantic.com/technology/2025/11/common-crawl-ai-training-data/684567/

• MIT Sloan — Addressing AI Hallucinations: https://mitsloanedtech.mit.edu/ai/basics/addressing-ai-hallucinations-and-bias/

About the Author

Suvendu is a full-stack web developer specializing in PHP, MySQL, and JavaScript, with extensive experience building digital product marketplaces including Evanto and the tech blog Banglar Katha. He focuses on e-commerce systems, SEO optimization, and exploring the intersection of traditional web development and emerging AI technologies.

📝 Document Statistics:

• Word Count: ~3,100 words

• Sections: 5 major stages + introduction and conclusion

• External Hyperlinks: 25+ authoritative sources

• Tables: 2 data tables with statistics

• Reading Level: Intermediate to Advanced

• Target Audience: Web developers, tech enthusiasts, digital platform builders

© 2026 Banglar Katha — All Rights Reserved. Written by Suvendu.

Last Updated: February 28, 2026